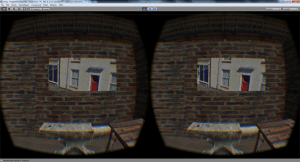

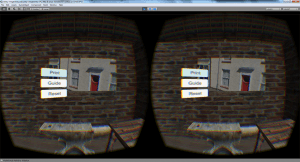

On April 22nd, 2015, we took our system for a preliminary test at the Get Up to Speed event in Sheffield. It was an incredibly productive day: we gathered user feedback that we will use to improve the system. Overall, it seemed to me that users enjoyed interacting with the system and were interested in where the project is headed. Hopefully the response to our final test will be as positive as the one we received at this event.